Resources to Improve Your Security

blog post

Long-distance Connections

GoodSync Connect lets devices that typically can’t communicate directly with one another overcome that barrier.

Read the Article

video

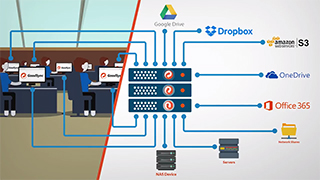

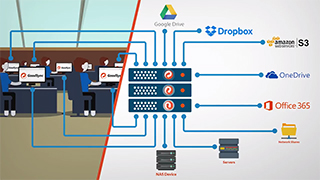

GoodSync for Servers

Backup and synchronization service for Windows and UNIX/Linux server operating systems, with unattended operation, multi-destination support, and automation support.

Watch the Video

white paper

Backup Demystified

Data backup strategies are an integral part of data loss prevention and business continuity. GoodSync provides the flexibility of multiple data strategies, ensuring the secure flow of mission-critical data at all times.

Download the White Paper

Blog Posts

blog post

Long-distance Connections

GoodSync Connect lets devices that typically can’t communicate directly with one another overcome that barrier.

Read the Article

blog post

Keep Amazon S3 Data Private with GoodSync

Amazon advises using a third-party tool to provide client-side encryption.

Read the Article

blog post

Protect Your Personal Data in the Cloud with Encryption by GoodSync

If you use one of the popular cloud file storage services, the company that operates it can obtain access to your data. It can analyze your data and share it, and you often have no control over who sees it.

Read the Article

blog post

Full and Incremental Backup with GoodSync

Implementing a full or incremental backup is easy with GoodSync.

Read the Article

blog post

GoodSync GDPR Compliance Statement

Siber Systems Inc. maintains a consistent level of data protection and security across the organization that fully complies with all GDPR provisions.

Read the Article

blog post

GoodSync GDPR Compliance Progress Statement

In 2016, the EU adopted the General Data Protection Regulation (“GDPR”). The GDPR is now recognized as law across the EU. GDPR enforcement begins on 25 May 2018.

Read the Article

Videos

video

GoodSync for Servers

Backup and synchronization service for Windows and UNIX/Linux server operating systems, with unattended operation, multi-destination support, and automation support.

Watch the Video

White Papers

white paper

Backup Demystified

Data backup strategies are an integral part of data loss prevention and business continuity. GoodSync provides the flexibility of multiple data strategies, ensuring the secure flow of mission-critical data at all times.

Download the White Paper

white paper

Synchronization Demystified

Effective data synchronization is essential to any modern business. GoodSync ensures uninterrupted, high availability of mission-critical data between servers, local and remote workstations, cloud storage accounts, and NAS devices.

Download the White Paper

white paper

GoodSync Enterprise

The Ideal Solution For Corporate File Synchronization and Backup

The ideal data plan should be safe, flexible, dependable, and cost-effective. GoodSync Enterprise meets all necessary criteria and exceeds a wide range of alternative options, acting as a unique and versatile backup and synchronization solution. It’s perfect for small businesses, larger corporations, and government entities.

Download the White Paper

white paper

GoodSync Control Center

Data and Security

GoodSync Control Center provides centralized management of data backup and synchronization jobs. All communication is performed securely, and security systems and protocols are tested regularly to ensure exceptional response rates.

Download the White Paper